What’s AI good for anyway?

AI May Not Be a Reliable Clinician, But It Can Help Improve Your Practice

AI in wound medicine is no longer a question of “if.” The real question — the harder one — is “what does AI do well and what does it do poorly?”

The AMA’s 2026 Physician Survey on Augmented Intelligence tells the story of growing AI adoption: 81% of physicians now report using AI in their practice, double the rate from just three years ago. The average physician uses 2.3 AI applications. Three-quarters say AI gives them an advantage in patient care.

But what are these clinicians actually using AI for? The real answer is less “sexy” than you might expect. Documentation and summarization dominate current use. Nearly 40% of physicians use AI to summarize research and standards of care — a 33-point jump since 2023.

Administrative and reimbursement tasks are another major area of opportunity for AI. Chart summaries, data extraction, discharge instructions, billing documentation, and patient portal response use cases are all growing rapidly. These are high-volume, time-intensive tasks where AI can reduce staff load without making complex clinical decisions.

Government and private payors are also arming themselves with AI: WISeR and other payor programs use it to assess the quality of claims submitted for reimbursement. Auditors are increasingly using similar tools.

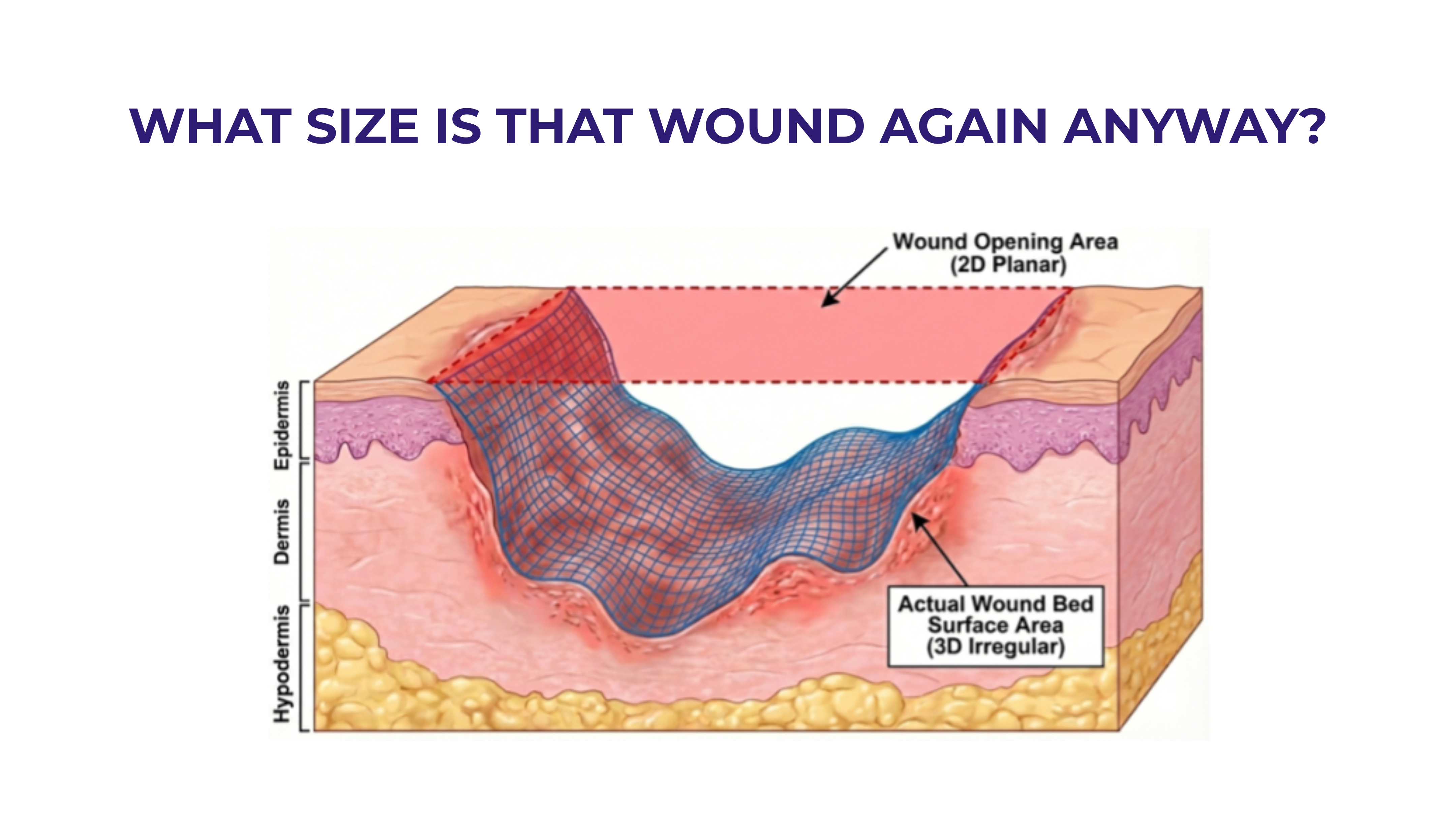

In wound care specifically, clinician support built on visual AI tools is showing real promise. AI-powered wound measurement, tissue classification, and image-based monitoring tools like those from our friends at Swift Medical and Mimosa Diagnostics are making wound assessment more objective and consistent across care settings.

LLMs are also beginning to support wound care in ways that go beyond imaging. Symptom-checking chatbots can translate patient-reported descriptions into standardized clinical language. A number of tools can also use ambient listening and generative AI to automatically transcribe and document patient encounters in real time.

In high-stakes clinical decision-making, however, it looks like AI is not quite ready for prime time. A February 2026 study in Nature Medicine stress-tested ChatGPT Health’s triage recommendations across 60 clinician-authored vignettes spanning 21 medical domains. The results followed an inverted U-shaped accuracy pattern: 93% accuracy for routine presentations, but performance collapsed at clinical extremes — 35% mistriage for nonurgent cases and 48% for emergencies. Among true emergencies, 51.6% were undertriaged, with the system directing patients experiencing diabetic ketoacidosis or impending respiratory failure to 24–48 hour evaluation rather than the ED (Ramaswamy et al., Nature Medicine, 2026).

The wound care space has its own version of this problem. When Corradini et al. (2025) benchmarked ChatGPT and Gemini against expert plastic surgeons for complex wound management, the LLMs achieved 60–75% full concordance with expert panels on management recommendations. That is promising for education and triage support, but both models also made critical errors.

Where is AI heading in wound care?

Wound care sits at a particularly interesting inflection point. The specialty is documentation-heavy, relies on serial visual assessment, involves multidisciplinary teams, and manages patients across care transitions — all characteristics that play to AI’s current strengths in visual analysis and documentation efficiency. In other words, the growing use of AI in wound care makes a lot of sense.

But the future of AI in medicine — and wound care specifically — isn’t a single tool making clinical decisions. It’s an ecosystem of purpose-built applications, each validated for its specific use case, operating under clinician oversight. Think real-time wound monitoring through smart devices, paired with reimbursement, compliance and billing support tools that reduce administrative burden while improving care quality.

The wound care providers who will thrive in this environment aren’t the ones who adopt AI fastest. They’re the ones who adopt it most thoughtfully — choosing tools from trusted sources to perform tasks AI is good at, maintaining clinical oversight, and treating AI as infrastructure that makes their expertise more scalable, not less necessary.

The technology is moving fast. Will your practice be able to keep up? We would love to help you use AI most effectively, so reach out to us if you would like to learn more.

References

1. American Medical Association. 2026 Physician Survey on Augmented Intelligence. AMA Center for Digital Health and AI, March 2026.

2. Ramaswamy, A. et al. “ChatGPT Health performance in a structured test of triage recommendations.” Nature Medicine, February 2026. https://doi.org/10.1038/s41591-026-04297-7

3. Chang, D.-H. et al. “Artificial Intelligence-Assisted Clinical Decision Support System in Telemedical Wound Care: A Randomized Controlled Trial.” Annals of Plastic Surgery, 96(Supplement 2): S61–S66, February 2026.

4. Nelson, S., Lay, B., & Johnson, A.R. “Artificial Intelligence in Skin and Wound Care: Enhancing Diagnosis and Treatment with Large Language Models.” Advances in Skin & Wound Care, 38(9): 457–461, October 2025.

5. Zavaleta-Monestel, E., Arguedas-Chacón, S., & Cordero-Bermúdez, K. “Artificial intelligence for surgical data management and decision support: lessons from wound care.” Frontiers in Artificial Intelligence, 8:1718436, November 2025. https://doi.org/10.3389/frai.2025.1718436

6. Corradini, L. et al. “AI vs. MD: Benchmarking ChatGPT and Gemini for Complex Wound Management.” Journal of Clinical Medicine, 14: 8825, December 2025. https://doi.org/10.3390/jcm14248825
